The Importance of Copyediting and Proofreading

February 27, 2024

Importing Excel Files in MVC Core with NPOI Mapper and Bulk Copy (Without Excel on the Server)

A common requirement in our custom Web Applications is the ability to upload an Excel File into the database. There are several ways to accomplish this, but many of those required having Excel already installed in the server. This article will introduce a way to meet the requirements without having to burn an Excel license and along the way, we will learn about a new library and reacquaint ourselves with an old one.

This article already assumes that you know the ins and outs of uploading files, but if you are looking for information on that, Microsoft has you covered: https://bit.ly/2MKOfvr.

Using NPOI Mapper

Apache POI is a Java based library used for reading and writing Microsoft Office Formats. The library was ported over to .NET as NPOI (https://bit.ly/2t2aVyg). The library is extremely powerful, but often feels a bit heavy handed, especially if you are “just” taking data from one bucket and pouring it into another. That’s where NPOI Mapper comes into play (https://bit.ly/2G36KKZ). This library allows you to map an Excel File onto a C# class by convention, attribute mapping or by a robust mapping syntax. If you have ever used AutoMapper, then you get the where this library is coming from.

To get started, add NPOI.Mapper from NuGet:

Install-Package Npoi.Mapper

Mapping Data to Class Properties

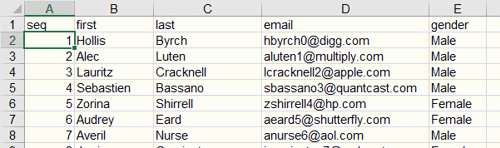

The data in the Excel file contains the following data:

NPOI.Mapper provides custom attributes that you can use to map the Headers in row 1 to the properties of your class:

public class UploadData

{

[Ignore]

public int Id { get; set; }

[Column("seq")]

public int AlternateKey { get; set; }

[Column("first")]

public string FirstName { get; set; }

[Column("last")]

public string LastName { get; set; }

[Column("email")]

public string Email { get; set; }

[Column("gender")]

public string Gender { get; set; }

}

One of the shortcomings of NPOI.Mapper that I have found is that the first row in your Excel sheet must be the Header Row. There is a “todo” listed in the code to implement a Row Offset, but, that has not been implemented as of this article.

There is also an option to use a Fluent Based Syntax to perform these mappings. This option is helpful when you need to combine data from multiple columns into one property or if you have to do any type casting.

Once the mappings have been defined, the data can be transferred with the following two lines of code:

Mapper mapper = new Mapper(fileInfo.OpenReadStream());

IEnumerable<UploadData> data = mapper.Take<UploadData>("data").Select(x => x.Value);

The Mapper object conveniently takes in a stream object which we can obtain from the IFormFile property that MVC uses when uploading files. The Mapper’s Take method takes the Type you are mapping onto (in this case, our UploadData class) and the Worksheet containing the data (this can either be the sheet number or its name). I’ve added the select statement to retrieve the values.

Saving the Data: Part One – The First Approach

The UploadData class is also the DBSet that I configured with Entity Framework. My first approach was to simply take the results of the mapping, add it to the DbContext and then save the changes:

Mapper mapper = new Mapper(fileInfo.OpenReadStream());

IEnumerable<UploadData> data = mapper.Take<UploadData>("data").Select(x => x.Value);

await _dbContext.UploadData.AddRangeAsync(data);

await _dbContext.SaveChangesAsync();

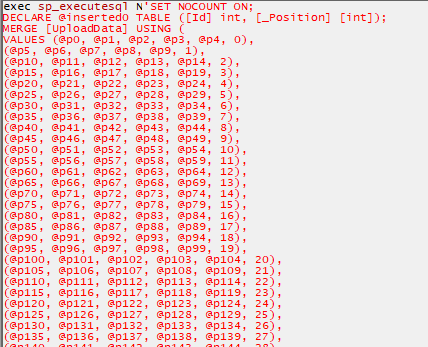

This works great with small sets of data; however, larger sets take much longer. That’s because Entity Framework will submit all the changes to the server in one go, but will generate a large insert statement and pass the values in as parameters:

To trim down the time spent on Inserts, I turned to an old reliable friend: Bulk Insert.

Using Bulk Insert

It would have been nice if Entity Framework provided a better bulk update story. But we can turn to ADO.NET which provides the SqlBulkCopy object (https://bit.ly/2G5awn9). There are two hurdles that we have to clear in order to use SqlBulkCopy: the first is that we need to convert our IEnumerable into a DataTable and the second is that we need to create a mapping between the destination table and the columns in the DataTable.

The first hurdle is cleared with a custom extension method that uses reflection to get the property names and the underlying data type. The convention in play here is that the name of our properties matches the name of the columns in the database:

public static DataTable ToDataTable<T>(this IEnumerable<T> data)

{

var propertyInfos = typeof(T).GetProperties();

DataTable table = new DataTable();

foreach (var property in propertyInfos)

{

table.Columns.Add(property.Name);

}

foreach (T item in data)

{

var newRow = table.NewRow();

int column = 0;

foreach (var property in propertyInfos)

{

var value = property.PropertyType.IsEnum

? Convert.ChangeType(property.GetValue(item, null), Enum.GetUnderlyingType(property.PropertyType))

: property.GetValue(item, null);

newRow[column] = value;

column++;

}

table.Rows.Add(newRow);

}

return table;

}

Once we have the DataTable, we can get the schema data for the destination table and then iterate over them to create the mappings:

var bulkCopy = new SqlBulkCopy(connection) {DestinationTableName = "UploadData"};

var schema = connection.GetSchema("Columns", new[] {null, null, "UploadData", null});

// Create Column Mappings.

foreach (DataColumn sourceColumn in dt.Columns)

{

foreach (DataRow row in schema.Rows)

if (string.Equals(sourceColumn.ColumnName, (string) row["COLUMN_NAME"],

StringComparison.OrdinalIgnoreCase))

bulkCopy.ColumnMappings

.Add(sourceColumn.ColumnName, (string) row["COLUMN_NAME"]);

}

bulkCopy.WriteToServer(dt);

The final line executes the BulkCopy.

Wrapping up

This article introduced a few tools that I have found to be very helpful when having to upload and parse excel files. This technique can work in Web based projects or Windows based projects. A few considerations do need to be made when implementing any file upload mechanism in the web. Chief among those are file upload limitations. ASP.NET Core has a default limiter that can be overridden, but it is recommend considering streaming the upload instead. There is more work involved, but it tends to be more flexible. I hope you have found this article helpful. You can find the source code I used for this demo over on GitHub: https://bit.ly/2sYxJyK.